Introduction

Progress in artificial intelligence has hit a hardware bottleneck. Developers and researchers are caught between the high cost of cloud computing and standard workstations that cannot meet the memory demands of current AI models. As a result, many teams have had to abandon new ideas or pay heavily for cloud resources just to run simple AI experiments.

The frustration is sharpest when developers work with models that have billions of parameters. Traditional PCs offer only 24–48GB of GPU memory at most, so developers must rent expensive cloud instances or shelve their most ambitious projects. The result is that innovation slows, access to cutting-edge AI tools narrows, and the gap widens between those who can afford enterprise-level infrastructure and those who cannot.

Enter the Nvidia DGX Spark, billed as the world’s smallest AI supercomputer, with shipments starting October 15, 2025. A desktop-sized machine with 128GB of unified memory and one petaflop of AI performance, it promises to make AI development more accessible. Priced at $3,999, the DGX Spark aims to bridge the gap between the limited hardware available to consumers and the powerful AI systems used by businesses.

Nvidia CEO Jensen Huang personally delivered the first units to Elon Musk at SpaceX’s Starbase facility, a sign of how much weight Nvidia places on this launch for the AI research community. Microsoft, Google, Meta, and Hugging Face are among the early adopters of the platform, which suggests strong industry confidence in it.

Features of Nvidia DGX Spark

Design and Form Factor

The DGX Spark redefines what a supercomputer looks like. Measuring just 150mm × 150mm × 50.5mm and weighing only 1.2kg, this compact device resembles a mini PC more than traditional server hardware. Its power needs are equally modest: the system runs on just 240W and plugs into any standard electrical outlet.

Core Specifications

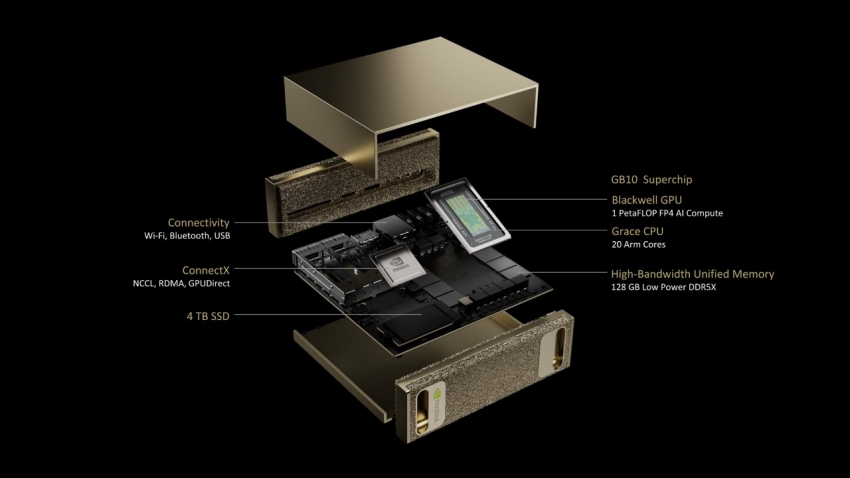

At the heart of the DGX Spark lies the GB10 Grace Blackwell Superchip, combining a 20-core ARM CPU with a Blackwell-generation GPU. The CPU features 10 high-performance Cortex-X925 cores paired with 10 efficient Cortex-A725 cores, providing balanced processing power for both general computing and specialized AI workloads.

The standout feature is the 128GB of LPDDR5x unified memory, shared between the CPU and GPU with a memory bandwidth of 273 GB/s. This unified architecture removes the usual bottleneck of copying data between system RAM and GPU memory, which makes it possible to load and run large AI models from a single memory pool.

Storage is a 4TB self-encrypting NVMe SSD, which leaves plenty of room for models, files, and development projects. Connectivity includes four USB-C ports, Wi-Fi 7, Bluetooth 5.4, 10GbE Ethernet, and HDMI 2.1a output for displays up to 8K at 120Hz.

AI Performance Capabilities

The DGX Spark delivers impressive AI performance metrics that rival much larger systems. The device achieves one petaflop of AI performance at FP4 precision, making it capable of running inference on models with up to 200 billion parameters locally. For fine-tuning tasks, the system can handle models with up to 70 billion parameters.

Real-world benchmarks demonstrate strong performance across various model sizes. Testing with Llama 3.1 8B showed 7,991 tokens per second for prefill and 20.5 tokens per second for decode, with decode throughput scaling to 368 tokens per second at batch size 32. The much larger Llama 3.1 70B also ran successfully, at 803 tokens per second for prefill and 2.7 tokens per second for decode.
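To put these throughput figures in per-request terms, the sketch below estimates end-to-end latency as prompt tokens divided by the prefill rate plus output tokens divided by the decode rate. The function name and the example prompt and reply lengths are illustrative assumptions. Only the tokens-per-second numbers come from the benchmarks quoted above, and the last line assumes the 368 tokens per second figure is aggregate throughput across the batch of 32.

```python
def estimate_latency_s(prompt_tokens, output_tokens, prefill_tps, decode_tps):
    """Rough single-request latency in seconds: prefill phase plus decode phase."""
    prefill_s = prompt_tokens / prefill_tps
    decode_s = output_tokens / decode_tps
    return prefill_s + decode_s


# Throughput figures quoted in the text (tokens per second).
LLAMA_8B = {"prefill_tps": 7991, "decode_tps": 20.5}
LLAMA_70B = {"prefill_tps": 803, "decode_tps": 2.7}

# Hypothetical request: 2,048-token prompt and 256-token reply.
for name, rates in [("Llama 3.1 8B", LLAMA_8B), ("Llama 3.1 70B", LLAMA_70B)]:
    total = estimate_latency_s(2048, 256, **rates)
    print(f"{name}: ~{total:.1f} s")

# Per-request decode rate if 32 requests share 368 tokens/s (assumed aggregate).
per_request_tps = 368 / 32
print(f"Batch 32: ~{per_request_tps:.1f} tokens/s per request")
```

Under these assumptions the decode phase dominates the total, so for interactive use the decode rate matters more than the headline prefill number.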

The system’s ConnectX-7 networking capabilities enable connecting two DGX Spark units together, effectively doubling the available memory to handle models with up to 405 billion parameters. This clustering capability opens possibilities for collaborative research and handling even larger model architectures.
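The parameter limits in this section can be sanity-checked with simple arithmetic on weight sizes. The sketch below is a rough estimate, not Nvidia's sizing method: it counts weights only, treats 1 GB as 10^9 bytes, and ignores the KV cache, activations, and operating system overhead. The helper names and the precision table are assumptions introduced for illustration.

```python
BYTES_PER_PARAM = {"fp16": 2.0, "fp8": 1.0, "fp4": 0.5}
UNIT_MEMORY_GB = 128  # unified memory per DGX Spark, from the text


def weights_gb(params_billion, precision):
    """Approximate weight footprint in GB (1 GB = 1e9 bytes), weights only."""
    return params_billion * BYTES_PER_PARAM[precision]


def fits(params_billion, precision, units=1):
    """True if the weights alone fit in the combined memory of `units` devices."""
    return weights_gb(params_billion, precision) <= units * UNIT_MEMORY_GB


for params, units in [(200, 1), (405, 1), (405, 2)]:
    for precision in BYTES_PER_PARAM:
        gb = weights_gb(params, precision)
        verdict = "fits" if fits(params, precision, units) else "does not fit"
        print(f"{params}B @ {precision} on {units} unit(s): {gb:.1f} GB, {verdict}")
```

By this estimate, a 200-billion-parameter model fits on one unit only at FP4, and a 405-billion-parameter model at FP4 needs the combined memory of two units, which is consistent with the limits stated above.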

Use Cases and Real-World Applications

AI Development and Research

The DGX Spark excels in scenarios where developers need rapid iteration and experimentation. Researchers can now prototype AI algorithms locally without depending on cloud resources or waiting for data center access. This local development approach proves particularly valuable for privacy-sensitive applications in healthcare, finance, and government sectors where data cannot leave controlled environments.

Academic institutions benefit significantly from the DGX Spark’s accessibility. KyungHyun Cho, professor at NYU Global Frontier Lab, notes that the system “allows access to peta-scale computing on a desktop and enables rapid prototyping and experimentation for advanced AI algorithms, even in privacy- and security-sensitive applications like healthcare”.

Robotics and Edge AI Applications

The compact form factor makes DGX Spark ideal for robotics laboratories and edge deployment scenarios. Unlike traditional server hardware, the DGX Spark can operate in lab environments, manufacturing floors, and field locations. Robotics teams can use the system for complex simulations, perception algorithm development, and autonomous system testing.

Early testing at Roboflow demonstrated the system’s capabilities in computer vision applications. Their team successfully built a Waymo vehicle counting application that performed real-time analysis of traffic cameras, showcasing the system’s ability to handle demanding visual AI tasks.

Creative and Enterprise Applications

Content creators and media professionals find value in the DGX Spark’s ability to run generative AI models locally. The system can handle image generation models like FLUX.1, video processing tasks, and audio production workflows without cloud dependencies. This local processing capability ensures creative work remains private and reduces ongoing operational costs.

Enterprise applications include AI-powered analytics, document processing, and quality control systems. Manufacturing companies can deploy DGX Spark units for visual inspection systems and smart factory solutions where real-time AI processing is critical.

What Are the Pros and Cons?

Local AI Independence: The biggest benefit is that AI development no longer has to depend on the cloud. Teams avoid recurring monthly cloud costs and keep full control over their data and models. This independence matters most for organisations with strict data protection requirements.

Unified Memory Architecture: The 128GB of unified memory is a major technical advantage. Unlike systems where GPU memory is separate from system RAM, the DGX Spark gives both the CPU and GPU access to the whole memory pool. This design makes it possible to load and run models that do not fit on regular hardware.

Complete Software Stack: Nvidia’s full AI software stack comes preinstalled on the system. This includes DGX OS (which is built on Ubuntu Linux), CUDA libraries, and access to NIM microservices. Familiar tools such as PyTorch, Jupyter, and Ollama work out of the box.
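A quick way to confirm that a preinstalled framework can see the GPU is a short PyTorch check. The sketch below is a generic sanity test, not an Nvidia-provided tool: `describe_accelerator` is a hypothetical helper name, and the values it reports on a DGX Spark are not shown here because they depend on the installed software versions.

```python
def describe_accelerator():
    """Report whether PyTorch is importable and whether it sees a CUDA device."""
    try:
        import torch
    except ImportError:
        # PyTorch is not installed in this environment.
        return {"torch_installed": False}

    info = {
        "torch_installed": True,
        "torch_version": torch.__version__,
        "cuda_available": torch.cuda.is_available(),
    }
    if info["cuda_available"]:
        # Query the first visible CUDA device.
        props = torch.cuda.get_device_properties(0)
        info["device_name"] = props.name
        info["device_memory_gb"] = round(props.total_memory / 1e9, 1)
    return info


print(describe_accelerator())
```

If `cuda_available` comes back `False` on a machine that should have a GPU, the usual suspects are a CPU-only PyTorch build or a driver mismatch.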

Scalability: Connecting two units together roughly doubles the available resources, which gives teams a path to grow with their computing needs. This ability to scale bridges the gap between desktop computing and data centre computing.

Limitations

Memory Bandwidth Bottleneck: Although the memory pool is large, the 273 GB/s bandwidth constrains many AI workloads. Independent benchmarks show that memory bandwidth, not compute power, limits performance in most real-world scenarios.
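A back-of-the-envelope bound shows why bandwidth matters so much for text generation. The sketch below assumes a dense model decoding one sequence at a time, where every weight is read from memory once per generated token. It ignores KV cache traffic and any compute limits, so real throughput will be lower. The function name and the precision choices are illustrative assumptions.

```python
BANDWIDTH_GBPS = 273  # memory bandwidth from the text, in GB/s


def decode_tps_upper_bound(params_billion, bytes_per_param, bandwidth_gbps=BANDWIDTH_GBPS):
    """Upper bound on single-stream decode tokens/s when each weight is read once per token."""
    weight_gb = params_billion * bytes_per_param
    return bandwidth_gbps / weight_gb


for params in (8, 70):
    for label, bytes_per_param in [("16-bit", 2.0), ("8-bit", 1.0), ("4-bit", 0.5)]:
        bound = decode_tps_upper_bound(params, bytes_per_param)
        print(f"{params}B @ {label}: at most {bound:.1f} tokens/s")
```

Because the bound scales inversely with bytes per parameter, halving the precision roughly doubles the ceiling. This is why quantisation tends to help decode speed on this kind of hardware more than extra compute would.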

Limited Gaming and General Computing: The DGX Spark runs DGX OS (Ubuntu Linux) rather than Windows, so it cannot run Windows games or typical Windows PC applications. This single-purpose focus makes it less versatile than a general-purpose workstation.

Price-Performance Considerations: At $3,999, the DGX Spark competes with alternatives such as a custom PC built around RTX 5090 GPUs, which may offer better raw performance for some tasks. Some reviewers argue that RTX 5090 plus Threadripper builds deliver more performance for the same price.

Power and Thermal Constraints: The 240W power envelope is efficient for the performance delivered, but it may limit sustained heavy workloads. The compact size also raises questions about thermal management during long, continuous AI training runs.

FAQs

Q: Can the DGX Spark run Windows?

No, the DGX Spark uses DGX OS, a customised version of Ubuntu Linux that is optimised for AI workloads. The system is designed for AI development rather than general computing.

Q: How does the DGX Spark compare with cloud AI computing?

The DGX Spark is a one-time purchase of $3,999, whereas cloud costs recur and can add up quickly. For teams that work on AI development regularly, the local approach usually proves cheaper in the long run. It also keeps data private and does not depend on an internet connection.
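The cost comparison comes down to a break-even point: the number of cloud hours whose cost equals the purchase price. The sketch below is a simplified illustration. The hourly rates are hypothetical placeholders, not quotes from any provider, and the calculation ignores electricity, depreciation, and any performance difference between the cloud instance and the DGX Spark.

```python
DGX_SPARK_PRICE_USD = 3999  # purchase price from the text


def break_even_hours(cloud_rate_usd_per_hour, hardware_price_usd=DGX_SPARK_PRICE_USD):
    """Hours of cloud usage whose cost equals the one-time hardware price."""
    if cloud_rate_usd_per_hour <= 0:
        raise ValueError("cloud rate must be positive")
    return hardware_price_usd / cloud_rate_usd_per_hour


# Hypothetical hourly rates, chosen only to show the shape of the trade-off.
for rate in (1.0, 2.5, 5.0):
    hours = break_even_hours(rate)
    print(f"${rate:.2f}/h: break-even after {hours:.0f} h (~{hours / 8:.0f} eight-hour days)")
```

Teams that use GPU instances only occasionally may never reach the break-even point, which is the case where staying in the cloud makes more sense.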

Q: Can I link more than one DGX Spark unit together?

Yes, the ConnectX-7 networking lets you link two DGX Spark units together and work with models that have up to 405 billion parameters. However, the current configuration supports clustering of at most two units.

Q: What kinds of AI models can the DGX Spark run?

It can run inference on models with up to 200 billion parameters and fine-tune models with up to 70 billion parameters. This includes popular models such as Llama 3.1, GPT-style architectures, and various vision models, depending on the quantisation and optimisation methods used.

Q: Is the DGX Spark good for people who are new to AI?

The DGX Spark is powerful, but it is aimed at developers, students, and data scientists who already have AI experience. Using the Linux-based operating system and the specialised AI software stack requires solid technical knowledge. Beginners may be better served at first by cloud-based AI tools or more user-friendly alternatives.

Conclusion

The Nvidia DGX Spark is a big step towards making AI research more accessible by bringing supercomputer-level performance to the desktop. It combines a small desktop form factor, 128GB of unified memory, and one petaflop of AI performance. Together, these address real problems that AI developers have faced because of hardware limits and expensive cloud dependencies.

At $3,999, the DGX Spark is a high-end product, but it offers strong value for serious AI development work. The ability to run models with up to 200 billion parameters locally, combined with Nvidia’s full software environment, makes it a complete development platform for everything from desktop prototyping to data centre deployment.

Prospective buyers should still weigh the memory bandwidth limits and the specialised Linux-based operating system before committing. For teams that are already committed to AI research and comfortable with Linux, the DGX Spark offers a compelling mix of power, convenience, and data privacy.

The broader impact goes beyond any single purchase. Jensen Huang told Elon Musk during the delivery that the DGX Spark continues Nvidia’s goal of “putting an AI computer in the hands of every developer to spark the next wave of breakthroughs.” This wider access to AI computing power could speed up progress in many fields, from robotics and healthcare to creative applications and edge computing.